## Summary

Lambda warm invocations of logic functions were spending **~440 ms** re-parsing and re-evaluating the user bundle on every call. The executor wrote the user code to a **randomly-named** temp file and `import()`-ed it, so each warm call resolved to a new URL and Node's ESM cache could never reuse the previous module record.

This PR makes the executor write to a **content-hash filename**, skip the write when the file already exists, and stop deleting it. Identical code now reuses the same module record across warm calls in the same container, dropping warm-invocation overhead by **~30–40%**.

## What changed

- `executor/index.mjs`: temp filename derived from `sha256(code)`, write skipped when file exists, no `fs.rm` on cleanup.
- `lambda.driver.ts`: single structured `[lambda-timing]` log per invocation with `totalMs / buildExecutorMs / getBuiltCodeMs / payloadBytes / invokeSendMs / reportDurationMs / billedMs / initDurationMs / coldStart`. Goes through the standard NestJS `Logger`.

No behavioural change for callers: same input → same output, same error semantics.

### Caveat: module-scope state now persists across warm calls

With a stable filename, the user bundle is evaluated **once per warm container**. Any module-scoped state or top-level side-effects in user code are now shared across invocations of the same container, instead of re-running on every call. This is documented in the executor and is the intended trade-off: module scope should be treated as a per-container cache, not as per-call isolation.

## Findings: measured impact

Same logic function (`fetch-prs`, ~12k PRs to page through), same workspace, same Lambda config (eu-west-3, 512 MB), token cache primed.
### Warm invocations

| Phase | Before fix | After fix | Δ |
| --- | --- | --- | --- |
| Executor `import(userBundle)` | ~440 ms | **~0 ms** | **-440 ms** |
| Lambda billed duration | ~1.5–1.7 s | **~1.0–1.1 s** | **~30–40%** |
| Server-perceived round-trip | ~1.7–2.0 s | **~1.0–1.2 s** | **~30–40%** |

### Cold starts

Unchanged: the cache helps subsequent warm calls in the same container, not the first one. Init Duration stays ~130–170 ms; total cold call ~2.5–3.0 s.

### Stress

Could not reproduce the previously-reported "every ~10th call times out" behaviour after the fix:

- 30 sequential calls: max 1.7 s, median ~1.1 s, 0 timeouts
- 50 concurrent calls: max 9.4 s (clear cold-start cluster), median ~1.5 s, 0 timeouts

Hypothesis: the warm-import overhead was eating into the headroom against the function timeout under bursty load; removing it pushed everything well below the limit.

## Observability

One structured log line per invocation, sent through the standard NestJS logger:

```
[lambda-timing] fnId=abc123 totalMs=1187 buildExecutorMs=2 getBuiltCodeMs=3 payloadBytes=1466321 invokeSendMs=1180 reportDurationMs=992 billedMs=1000 initDurationMs=n/a coldStart=false
```

`coldStart=true` whenever Lambda spun up a fresh container; on warm calls `buildExecutorMs` and `getBuiltCodeMs` collapse to single-digit ms, confirming the cache fix is working.

## Test plan

- [ ] CI green.
- [ ] Deploy to a Lambda-backed env, trigger a logic function several times in a row.
- [ ] Confirm `[lambda-timing]` warm invocations show `totalMs` ~30–40% lower than before, and `coldStart=false` after the first call in a container.
- [ ] Push a new version of an app; confirm the next call shows higher `buildExecutorMs` (new hash, new file written) followed by warm calls again.
- [ ] Smoke test: errors thrown by the user handler are still surfaced correctly.
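
When working through the `[lambda-timing]` items in the test plan, it can help to turn each log line into an object so that warm and cold calls are easy to compare. The snippet below is a minimal sketch and not part of this PR: `parseLambdaTiming` is a hypothetical helper, and it assumes the `key=value` layout shown in the Observability example, possibly preceded by a logger prefix.

```javascript
// Hypothetical helper (not in this PR): parse one `[lambda-timing]` log line.
// Assumes space-separated key=value pairs after the marker, as in the example line.
function parseLambdaTiming(line) {
  const marker = '[lambda-timing]';
  const start = line.indexOf(marker);
  if (start === -1) return null; // not a timing line

  const fields = {};
  const pairs = line.slice(start + marker.length).trim().split(/\s+/);
  for (const pair of pairs) {
    const eq = pair.indexOf('=');
    if (eq <= 0) continue; // skip tokens that are not key=value

    const key = pair.slice(0, eq);
    const raw = pair.slice(eq + 1);
    if (raw === 'true' || raw === 'false') {
      fields[key] = raw === 'true';
    } else if (raw !== '' && !Number.isNaN(Number(raw))) {
      fields[key] = Number(raw);
    } else {
      fields[key] = raw; // e.g. fnId=abc123 or initDurationMs=n/a stay as strings
    }
  }
  return fields;
}
```

Filtering the parsed records on `coldStart === false` and comparing `totalMs` before and after the deploy gives a direct check of the ~30–40% claim. Note that a purely numeric `fnId` would be converted to a number by this sketch.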

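For reviewers who want the caching idea from "What changed" in isolation, here is a minimal sketch of content-hash filenames combined with `import()`. It is not the code in `executor/index.mjs`: the directory name `logic-fn-bundles` and the helper names `bundlePathFor`, `ensureBundleOnDisk` and `loadUserModule` are assumptions made for illustration, and it is written as CommonJS only so that it runs as a standalone script.

```javascript
const { createHash } = require('node:crypto');
const fs = require('node:fs');
const os = require('node:os');
const path = require('node:path');
const { pathToFileURL } = require('node:url');

// Hypothetical cache directory; the real executor's temp location may differ.
const CACHE_DIR = path.join(os.tmpdir(), 'logic-fn-bundles');

// Identical code always maps to the same path, so the import URL is stable.
function bundlePathFor(code) {
  const hash = createHash('sha256').update(code).digest('hex');
  return path.join(CACHE_DIR, `${hash}.mjs`);
}

// Write the bundle only when it is missing, and never delete it afterwards.
function ensureBundleOnDisk(code) {
  const filePath = bundlePathFor(code);
  if (!fs.existsSync(filePath)) {
    fs.mkdirSync(CACHE_DIR, { recursive: true });
    fs.writeFileSync(filePath, code);
  }
  return filePath;
}

// import() of a URL that was already loaded in this process returns the
// existing module, so the bundle is not parsed or evaluated again.
async function loadUserModule(code) {
  const filePath = ensureBundleOnDisk(code);
  return import(pathToFileURL(filePath).href);
}
```

Loading the same source twice in one process yields the same module namespace object, which also illustrates the caveat about module scope: a bundle such as `let calls = 0; export const handler = () => ++calls;` keeps counting across calls within one container instead of restarting at 1. A production version would probably write to a temporary name and rename it into place so that a crash mid-write cannot leave a truncated file under the final hash name.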
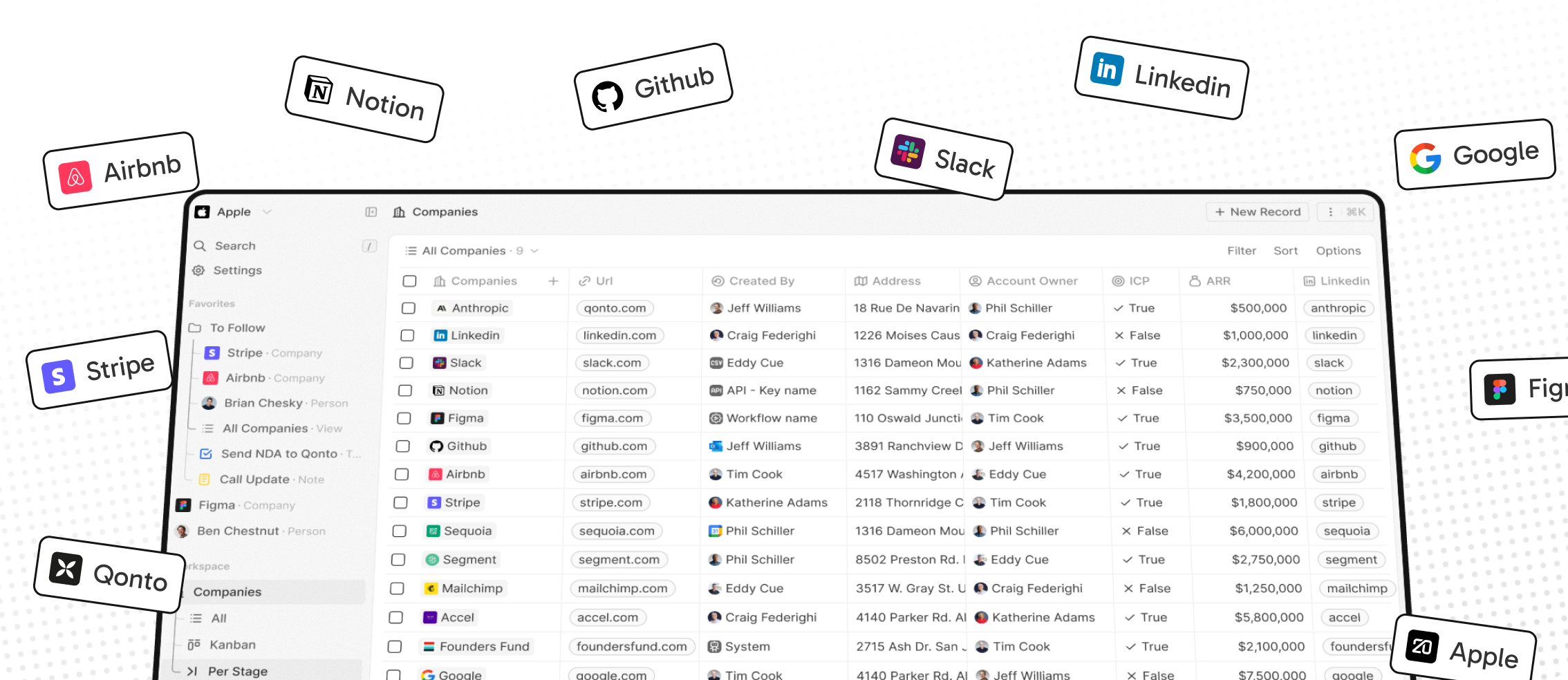

The #1 Open-Source CRM

Installation

See: 🚀 Self-hosting 🖥️ Local Setup

Why Twenty

We built Twenty for three reasons:

CRMs are too expensive, and users are trapped. Companies use locked-in customer data to hike prices. It shouldn't be that way.

A fresh start is required to build a better experience. We can learn from past mistakes and craft a cohesive experience inspired by new UX patterns from tools like Notion, Airtable or Linear.

We believe in open-source and community. Hundreds of developers are already building Twenty together. Once we have plugin capabilities, a whole ecosystem will grow around it.

What You Can Do With Twenty

Please feel free to flag any specific needs you have by creating an issue.

Below are a few features we have implemented to date:

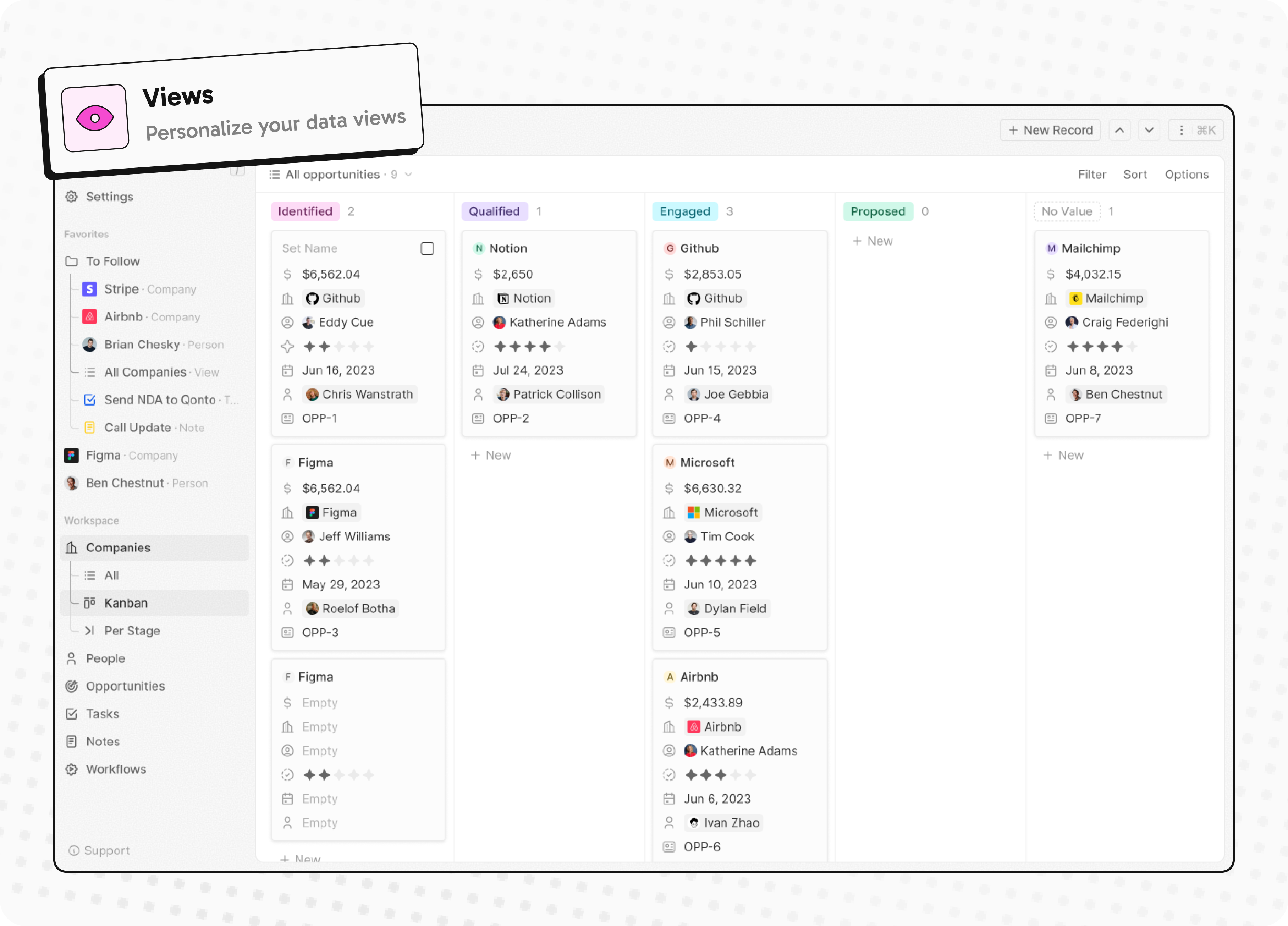

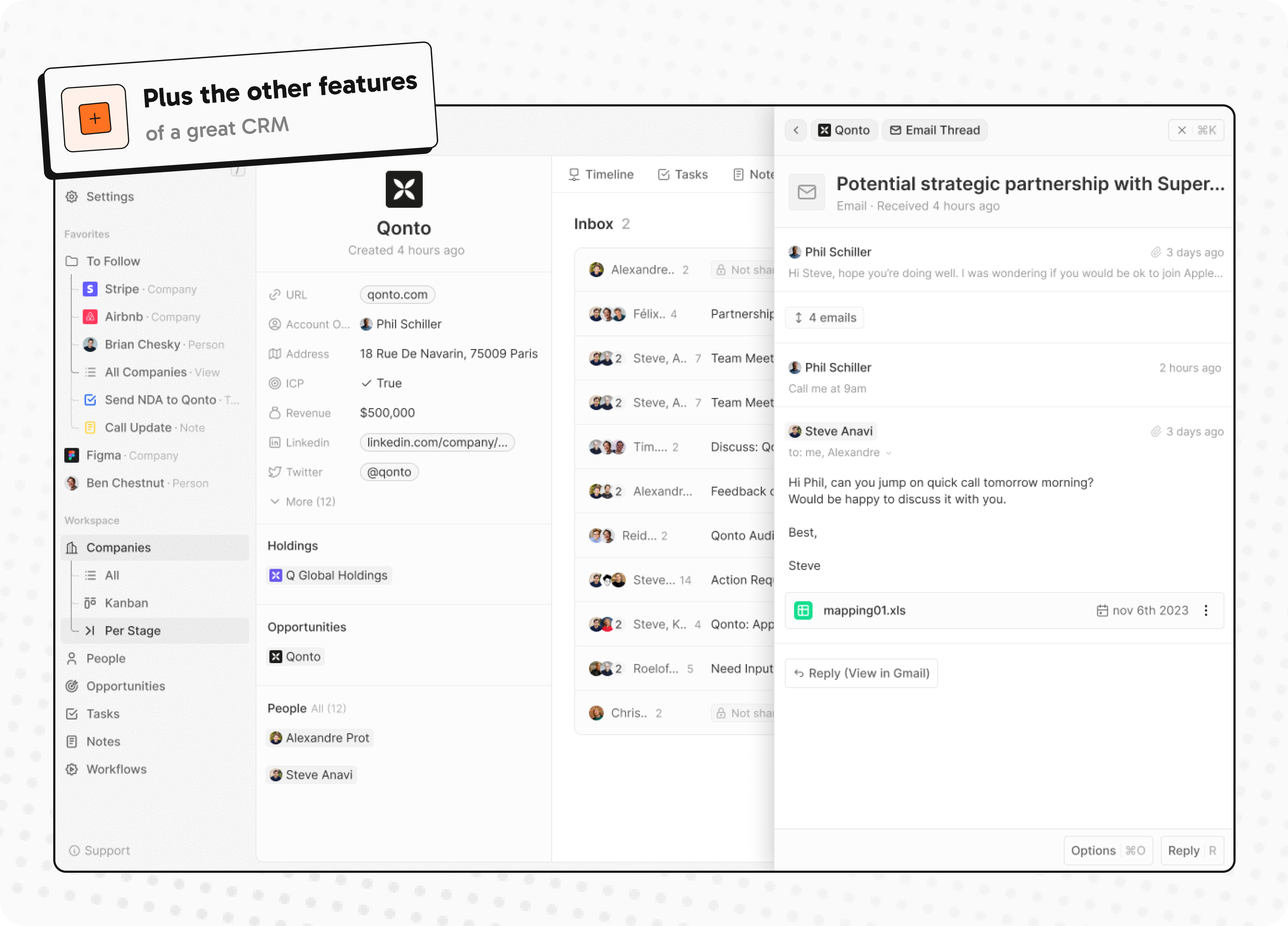

- Personalize layouts with filters, sort, group by, kanban and table views

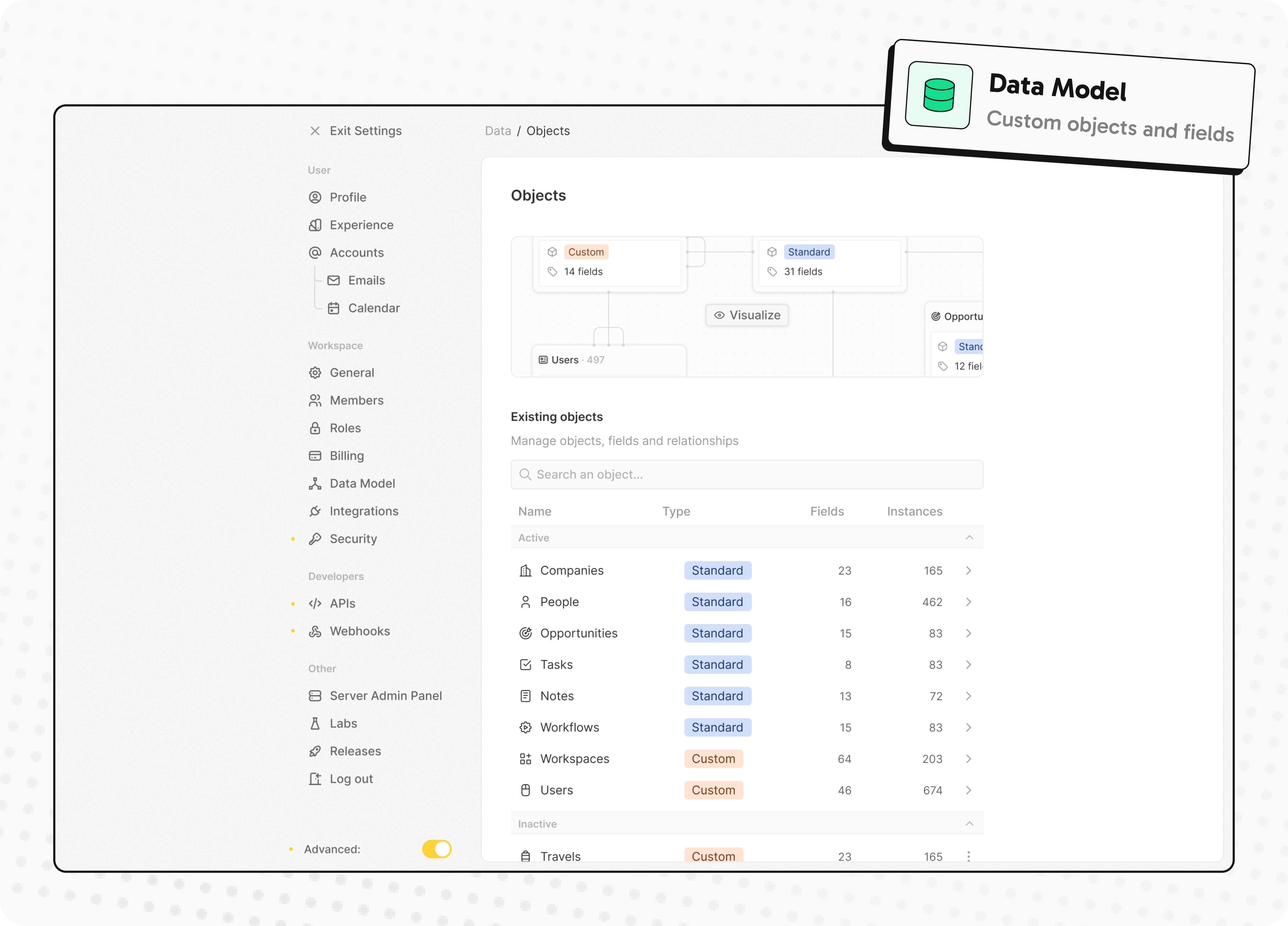

- Customize your objects and fields

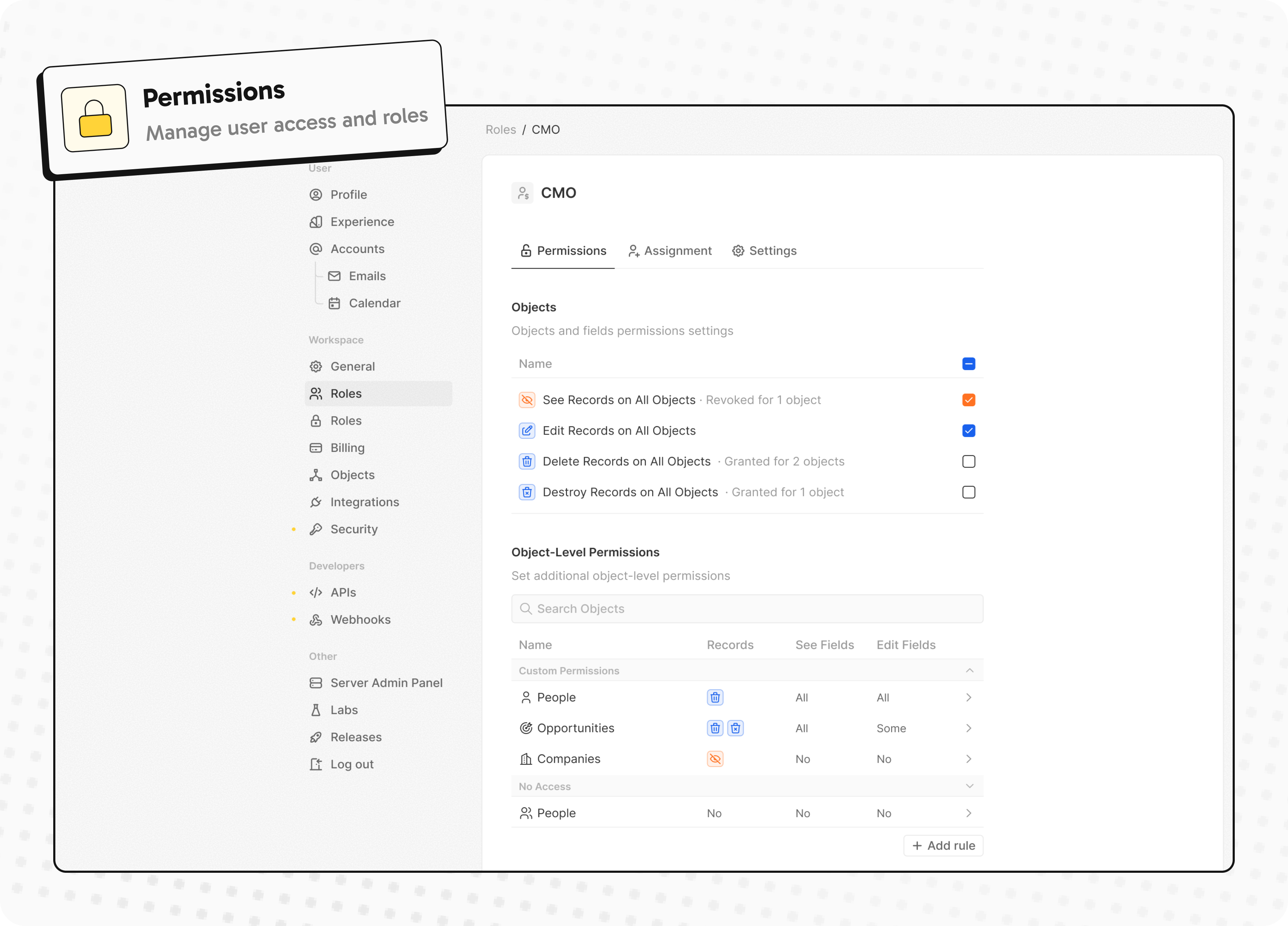

- Create and manage permissions with custom roles

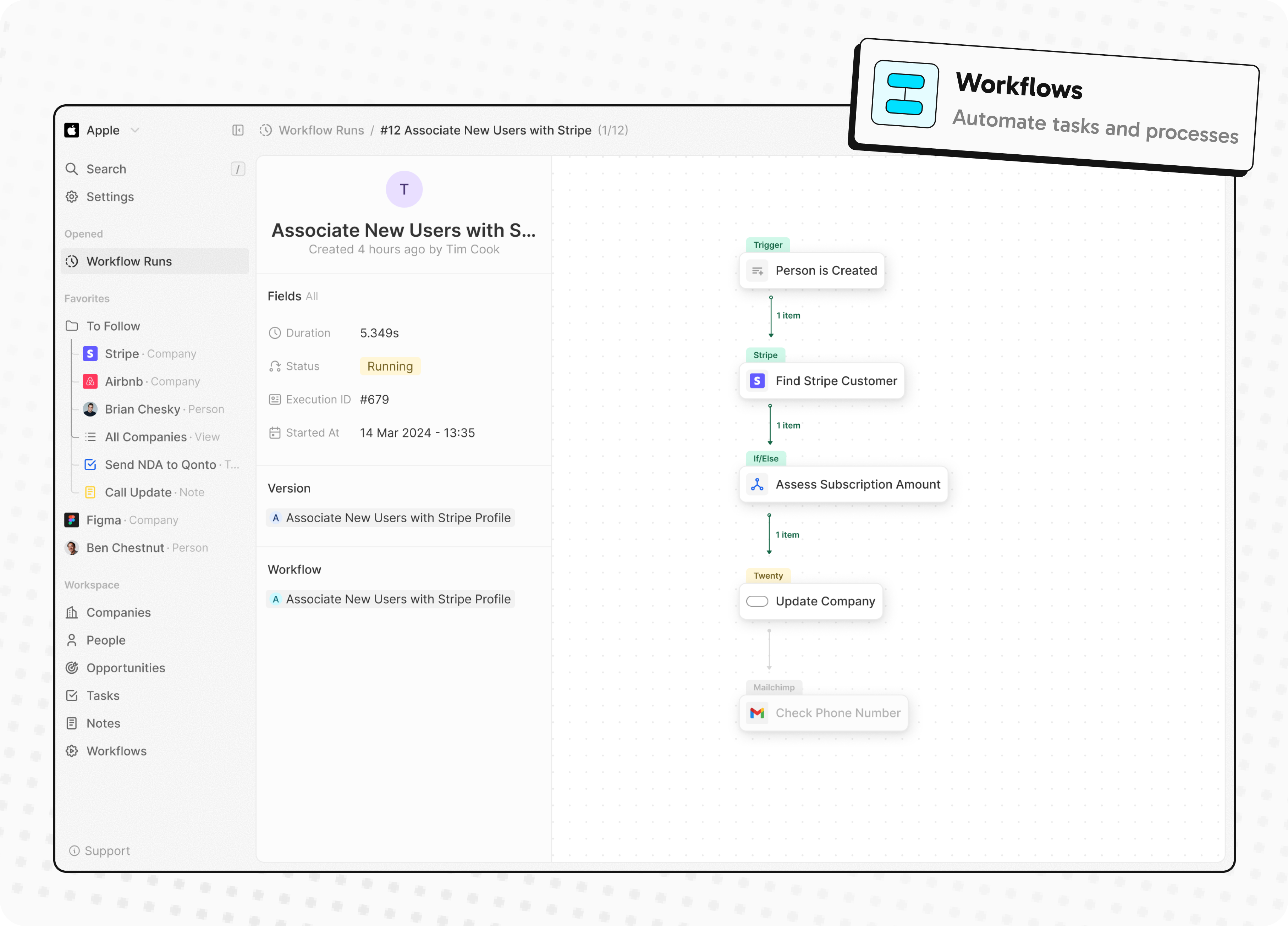

- Automate workflow with triggers and actions

- Emails, calendar events, files, and more

Stack

- TypeScript

- Nx

- NestJS, with BullMQ, PostgreSQL, Redis

- React, with Jotai, Linaria and Lingui

Thanks

Thanks to these amazing services that we use and recommend for UI testing (Chromatic), code review (Greptile), catching bugs (Sentry) and translating (Crowdin).

Join the Community

- Star the repo

- Subscribe to releases (watch -> custom -> releases)

- Follow us on Twitter or LinkedIn

- Join our Discord

- Improve translations on Crowdin

- Contributions are, of course, most welcome!